VIPER: Virtual Image Processing Environment for Research

Why?

Much work has gone towards enabling full autonomy for unmanned aircraft systems (UAS), ranging from trained convolutional deep learning approaches to navigation, to explicit SLAM approaches. However, because of regulatory hurdles and the high cost of failure (a collision), developing and especially training new behaviors for UAS can be an expensive and cumbersome process. Developing in simulation can partially address this problem. In addition, simulation opens the door to learning methodologies, specifically deep reinforcement learning, that require repeated failure and success during training. If the simulation is close enough to reality, algorithms developed and trained within it can be used directly on a real system with minimal modification or retraining.

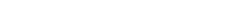

For computer vision tasks, this means that the graphics output of the simulator must be indistinguishable from reality, at least from the point of view of the algorithm. Contemporary robotics simulators, however, focus on the accurate simulation of physics and sensors, leaving graphics for visualization purposes only.

What?

To fill this need, we have developed a simulation environment that combines hardware-in-the-loop UAS simulation with high-fidelity, photo-realistic graphics output. We call this simulation environment the Virtual Image Processing Environment for Research (“VIPER”). A key element that sets VIPER apart from similar UAS simulators is the integration of the same hardware (i.e., the embedded boards) on which the algorithms would run onboard the UAS. This means that we tackle realism at two levels: in the simulated camera input and in the underlying hardware platform. The end goal is truly unplug-and-go usability, with models trained in VIPER immediately usable in the real world.

VIPER allows for the study of both traditional and learned obstacle avoidance and scene reconstruction techniques, and it enables the training of reinforcement learning algorithms that can be directly transferred to a real system running the same autopilot software. In addition, the principles used in VIPER can readily be extended to simulated quantities beyond visible objects, such as RF propagation and chemical plumes.